Rav Rommel Banaag – Software QA Engineer

Lead QA Engineer | Test Strategy & Automation | Building QA Foundations for Startups | ISTQB® Certified

I’m a Software QA Engineer with 8+ years of experience ensuring high-quality releases across web and mobile applications. I specialize in building QA foundations from scratch, designing test strategies, and implementing scalable automation frameworks for startups and growth-stage teams. My focus is simple: ship fast, reduce risk, and protect user trust.

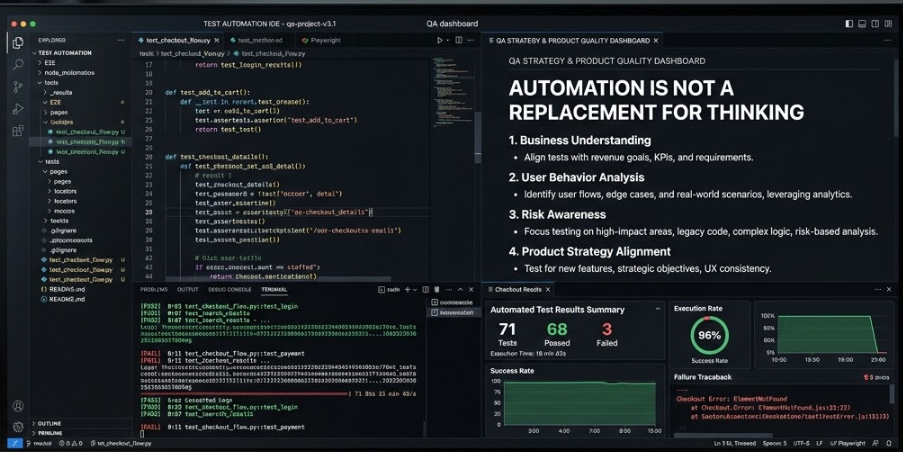

How I Decide What to Automate in Testing

Test automation is one of the most misunderstood topics in software quality engineering. Many teams think success means automating everything. In real production environments, that approach often creates more maintenance work without significantly improving product stability. As a software QA engineer, my philosophy is simple. I do not aim to automate everything. Instead, I automate strategically. Test automation should help teams release faster, detect regression risks early, and improve confidence in production deployments. Automation is a tool — not a replacement for engineering judgment.

Test Automation Is About Quality Confidence, Not Coverage

Many teams chase a high automation percentage.

But in real projects, quality is not measured by how many tests are automated.

I focus on quality confidence.

The main questions I ask are:

👉 Does this automation help protect the product from regression risk?

👉 Does it help developers get faster feedback?

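If the answer to both is no, the automation is probably not worth building.

To find where the regression risk actually sits, I ask four more questions about each feature: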

1. Who gets affected if this fails?

Features that directly affect users, revenue, or compliance require strict verification. Authentication logic, transaction processing, and personal data handling are high-priority.

2. How often does this logic change?

Modules under active development have higher regression risk. I usually add automated regression coverage around components that change often.

3. Is this feature new or complex?

New features and complex business rules carry uncertainty. I focus on boundary testing, integration flows, and state transitions.

4. Does this feature touch revenue, users, or compliance?

Anything involving payments, customer data, or regulatory requirements receives deeper test coverage.

I Focus Automation on Critical User Flows

Critical user flows are the backbone of product value. These are paths where failure directly affects users or business operations.

Authentication and login systems

Payment and checkout processes

Account and profile management

Core transaction workflows

Data submission and storage validation

Why This Matters

Business systems must remain stable across updates. Automating critical flows ensures that core functionality is continuously verified during development cycles.

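Why Exhaustive Testing Is Not Practical

Every input field, browser, device, and user state multiplies the number of possible test cases, so testing every combination is not feasible for a real product. Coverage has to be prioritized by risk.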
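A quick calculation shows how fast the numbers grow. The sketch below uses a hypothetical signup form; the dimension names and value counts are illustrative assumptions, not data from a real product.

```python
from math import prod

# Hypothetical signup form: number of meaningful test values per input dimension.
# These counts are illustrative assumptions, not measurements from a real product.
dimensions = {
    "country": 20,
    "age_bracket": 5,
    "payment_method": 4,
    "browser": 4,
    "device_type": 3,
}

def exhaustive_case_count(dims: dict[str, int]) -> int:
    """Every value combined with every other value: the product of the counts."""
    return prod(dims.values())

def each_value_once_count(dims: dict[str, int]) -> int:
    """Fewest cases that still use every value at least once: the largest count."""
    return max(dims.values())

print("Exhaustive combinations:", exhaustive_case_count(dimensions))      # 4800
print("Cases to use every value once:", each_value_once_count(dimensions))  # 20
```

Five modest dimensions already produce 4,800 combinations, while exercising each value once takes only 20 cases. Techniques such as pairwise testing sit between those two extremes, and risk decides which combinations deserve the extra cases.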

High-Risk Logic Is a Primary Automation Target

Examples include:

Financial computation modules

Security validation rules

Data transformation layers

Integration boundaries between services
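For a financial computation module, automated checks earn their keep at the boundaries: zero, full discount, and rounding. The sketch below tests a hypothetical `apply_discount` function; the half-up rounding to cents is an assumed business rule, and a real product's rule should come from its requirements.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")

def apply_discount(price: Decimal, percent: Decimal) -> Decimal:
    """Hypothetical pricing rule: discount a price by a percentage, rounded half-up to cents."""
    if price < 0:
        raise ValueError("price must be non-negative")
    if not Decimal("0") <= percent <= Decimal("100"):
        raise ValueError("percent must be between 0 and 100")
    discounted = price * (Decimal("100") - percent) / Decimal("100")
    return discounted.quantize(CENT, rounding=ROUND_HALF_UP)

# Boundary cases: (price, percent, expected). Decimal avoids binary floating-point error.
BOUNDARY_CASES = [
    (Decimal("100.00"), Decimal("0"), Decimal("100.00")),  # no discount
    (Decimal("100.00"), Decimal("100"), Decimal("0.00")),  # full discount
    (Decimal("0.00"), Decimal("50"), Decimal("0.00")),     # zero price
    (Decimal("0.05"), Decimal("50"), Decimal("0.03")),     # 0.025 rounds half-up
    (Decimal("19.99"), Decimal("15"), Decimal("16.99")),   # 16.9915 rounds down
]

def run_boundary_cases() -> list[str]:
    """Return a message for every boundary case whose result is wrong."""
    failures = []
    for price, percent, expected in BOUNDARY_CASES:
        actual = apply_discount(price, percent)
        if actual != expected:
            failures.append(f"{price} at {percent}%: expected {expected}, got {actual}")
    return failures

print(run_boundary_cases() or "all boundary cases passed")
```

Rounding cases like `0.05` at 50% are exactly the kind of low-likelihood, high-impact defect that is cheap to pin down once and expensive to find in production.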

I evaluate risk using a simple mindset:

If the impact of a failure is high, automation becomes more valuable. Even when a bug is unlikely, the consequence of failure may justify the investment in test automation.
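One common way to make this concrete is to score each area as likelihood times impact. The 1-to-5 scales, the threshold of 10, and the example areas below are assumptions for illustration; the point is that a high impact can justify automation even when the likelihood is low.

```python
from dataclasses import dataclass

@dataclass
class RiskItem:
    name: str
    likelihood: int  # 1 (rare) to 5 (frequent); assumed scale
    impact: int      # 1 (cosmetic) to 5 (revenue, security, or compliance loss); assumed scale

    @property
    def score(self) -> int:
        return self.likelihood * self.impact

AUTOMATION_THRESHOLD = 10  # hypothetical cut-off; each team would tune its own

def automation_candidates(items: list[RiskItem]) -> list[str]:
    """Return names whose risk score meets the threshold, highest score first."""
    ranked = sorted(items, key=lambda item: item.score, reverse=True)
    return [item.name for item in ranked if item.score >= AUTOMATION_THRESHOLD]

items = [
    RiskItem("payment rounding", likelihood=2, impact=5),      # unlikely but costly: 10
    RiskItem("login session expiry", likelihood=3, impact=4),  # 12
    RiskItem("footer link color", likelihood=4, impact=1),     # frequent but harmless: 4
]
print(automation_candidates(items))  # ['login session expiry', 'payment rounding']
```

The footer change happens more often than the payment bug, yet it never reaches the threshold, because its impact is low.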

Repetitive Testing Tasks Should Be Automated

Humans are not efficient at repetitive validation. If a scenario must be executed every release, it is a strong candidate for automation. Common repetitive tests include:

Form field validation rules

Search and filtering behavior

Multi-step workflow consistency checks

Cross-browser smoke testing
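Repetitive checks like form validation fit a table-driven style: the rule lives in one function and the scenarios live in data, so adding a case means adding a row. The `is_valid_email` rule below is a hypothetical, deliberately simplified validator, not a complete email specification.

```python
import re

# Hypothetical, deliberately simplified rule: local part, "@", domain, dot, 2+ letter TLD.
# Real email address rules are more permissive than this.
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[A-Za-z]{2,}")

def is_valid_email(value: str) -> bool:
    return EMAIL_PATTERN.fullmatch(value) is not None

# Each row is one scenario: (input, expected result). New scenarios are new rows.
CASES = [
    ("user@example.com", True),
    ("first.last@sub.example.org", True),
    ("", False),
    ("user@", False),
    ("@example.com", False),
    ("user name@example.com", False),
    ("user@example", False),
    ("user@@example.com", False),
]

def failing_cases(cases: list[tuple[str, bool]]) -> list[str]:
    """Return a readable message for every row whose result differs from the expectation."""
    return [
        f"{value!r}: expected {expected}, got {is_valid_email(value)}"
        for value, expected in cases
        if is_valid_email(value) != expected
    ]

print(failing_cases(CASES) or "all validation cases passed")
```

In a pytest suite, the same table would typically feed `@pytest.mark.parametrize`, so each row is reported as its own test.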

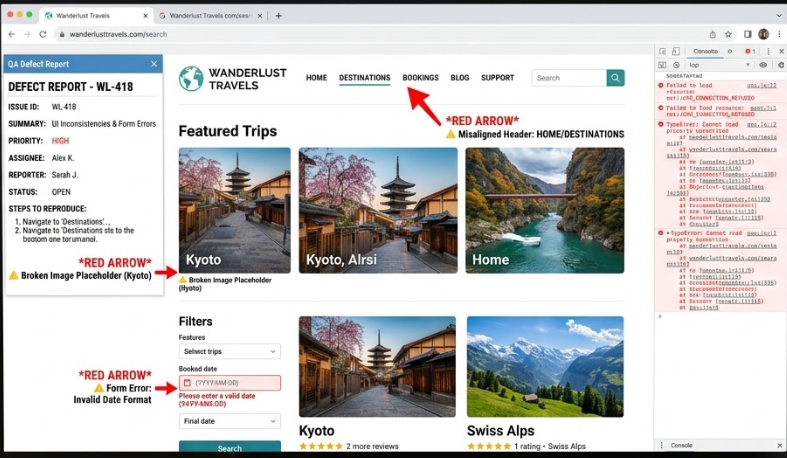

When Manual Testing Is Still Better

Software teams must release features quickly without compromising reliability, and automation covers only part of that work. I usually structure testing into three layers:

Fast Confidence Checks

These are lightweight validations before release, including login flow checks, core transactions, and basic API responses.

Regression Protection Layer

Automated test suites protect existing functionality and prevent unintended changes.

Exploratory Testing

Human-driven exploration helps discover unexpected behavior in real-world usage scenarios. Exploratory testing is not random: it is guided by product understanding and risk awareness.

Beyond these layers, manual testing remains the better choice in several situations.

User Experience Evaluation

Automation cannot judge:

Visual design quality

Usability clarity

Emotional user response

Accessibility perception

Human insight is necessary.

Exploratory Testing

Instead of following scripts, QA engineers:

Observe system behavior

Simulate real user thinking

Try unexpected input patterns

Many serious production bugs are found this way.

Rapidly Changing Features

For features that are still evolving, I postpone automation until:

Business rules are finalized

UI structure is stable

Feature behavior is consistent

Automation Maintenance Cost Matters

Automation is code, and like any code it requires ongoing maintenance:

Refactoring

Dependency updates

Selector stability management

Environment compatibility checks

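Poorly maintained tests tend to become flaky, passing or failing without any change to the code, and flaky tests reduce team trust in automation. I aim for automation that is: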

Stable

Predictable

Easy to maintain
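Flaky tests, which pass or fail without any change to the code, can be caught by rerunning a test against unchanged code and flagging mixed results. The sketch below is framework-agnostic; `classify_test` and the simulated intermittent test are hypothetical, and the failure pattern is deterministic so the example is repeatable.

```python
from collections import Counter
from typing import Callable

def classify_test(test: Callable[[], bool], runs: int = 20) -> str:
    """Run one test repeatedly against unchanged code and classify the outcome."""
    results = Counter(test() for _ in range(runs))
    if results[True] == runs:
        return "stable-pass"
    if results[False] == runs:
        return "stable-fail"
    return f"flaky ({results[True]}/{runs} passed)"

def make_intermittent_test(fail_every: int) -> Callable[[], bool]:
    """Simulated flaky test (for example, a timing issue) that fails on every Nth call."""
    calls = 0

    def test() -> bool:
        nonlocal calls
        calls += 1
        return calls % fail_every != 0

    return test

print(classify_test(lambda: 2 + 2 == 4))         # stable-pass
print(classify_test(make_intermittent_test(3)))  # flaky (14/20 passed)
```

Automatic retries until green would hide exactly this signal, so a flagged test is better fixed or quarantined than silently retried.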

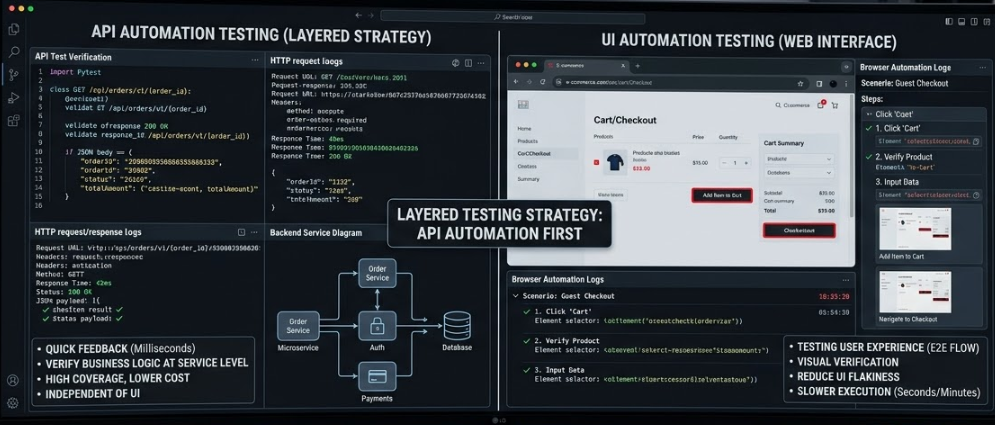

UI Automation vs API Automation

I follow a layered testing strategy.

API Automation First

API tests run faster and are more reliable than UI tests. They offer:

Quick execution feedback

Lower UI flakiness risk

Strong business logic verification
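An API-level check can verify business logic directly, without a browser. The sketch below uses only the Python standard library; the `/api/orders/{id}` endpoint, its JSON shape, and the local fake server are hypothetical stand-ins so the example runs on its own.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

class FakeOrderHandler(BaseHTTPRequestHandler):
    """Hypothetical stand-in for a real service: GET /api/orders/42 returns one order."""

    def do_GET(self):
        if self.path != "/api/orders/42":
            self.send_error(404)
            return
        body = json.dumps({
            "id": 42,
            "items": [
                {"sku": "A1", "price_cents": 1250, "qty": 2},
                {"sku": "B7", "price_cents": 499, "qty": 1},
            ],
            "total_cents": 2999,
        }).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence per-request logging

def start_fake_server() -> tuple[HTTPServer, str]:
    server = HTTPServer(("127.0.0.1", 0), FakeOrderHandler)  # port 0: the OS picks a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server, f"http://127.0.0.1:{server.server_address[1]}"

def check_order_total(base_url: str, order_id: int) -> list[str]:
    """Check status, content type, and the business rule total == sum(price * qty)."""
    problems = []
    # urlopen raises HTTPError for 4xx/5xx responses, which fails the check loudly.
    with urllib.request.urlopen(f"{base_url}/api/orders/{order_id}", timeout=5) as resp:
        if resp.status != 200:
            problems.append(f"unexpected status {resp.status}")
        if resp.headers.get("Content-Type") != "application/json":
            problems.append("response is not JSON")
        order = json.loads(resp.read())
    expected = sum(item["price_cents"] * item["qty"] for item in order["items"])
    if order["total_cents"] != expected:
        problems.append(f"total_cents {order['total_cents']} != computed {expected}")
    return problems

server, base_url = start_fake_server()
print(check_order_total(base_url, 42) or "all API checks passed")
server.shutdown()
server.server_close()
```

Against a real system, only `check_order_total` would remain, pointed at a test environment's base URL instead of the fake server.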

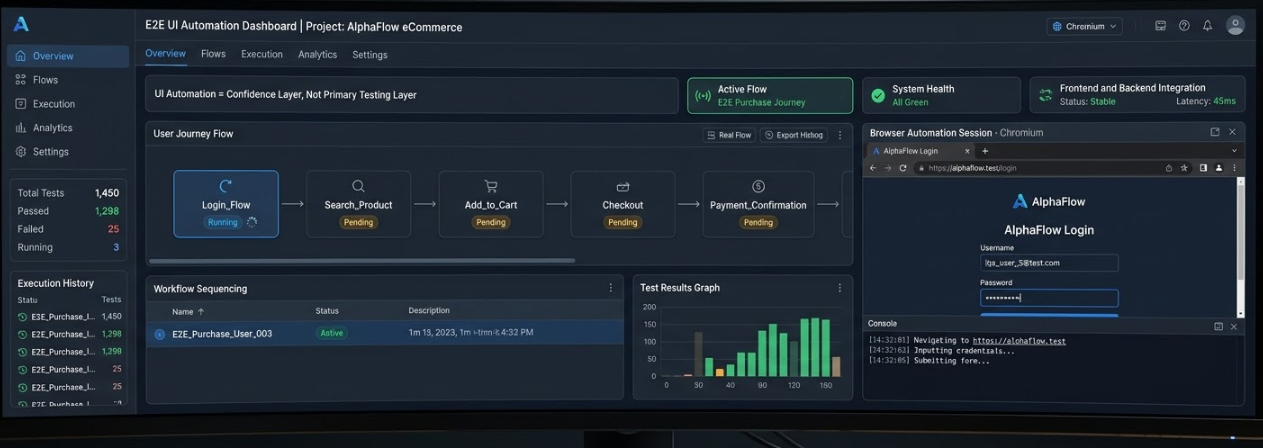

UI Automation for End-to-End Confidence

UI automation is useful for validating:

User journey flow

Frontend and backend integration

Workflow sequencing

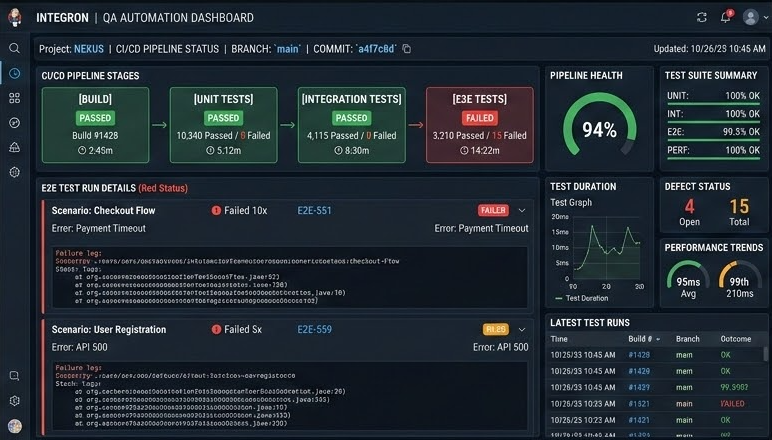

CI/CD Integration Is Essential

Good CI/CD automation should:

Run reliably in headless mode

Provide fast pass/fail signals

Finish execution quickly

Fail early when critical errors appear
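The fail-early principle can be sketched as a staged runner that orders checks from fastest to slowest and stops at the first failing stage. The stage names, the checks, and the 600-second budget are hypothetical; a real pipeline would usually express the same ordering in its CI configuration.

```python
import time
from typing import Callable

# Each check returns True when it passes.
Check = Callable[[], bool]

def run_pipeline(stages: list[tuple[str, list[Check]]], time_budget_s: float = 600.0) -> int:
    """Run stages in order, stop at the first failing stage, and return a CI exit code."""
    start = time.monotonic()
    for name, checks in stages:
        failed = [i for i, check in enumerate(checks) if not check()]
        elapsed = time.monotonic() - start
        if failed:
            print(f"FAIL {name}: checks {failed} failed after {elapsed:.1f}s")
            return 1  # fail early: later, slower stages never run
        print(f"PASS {name} ({elapsed:.1f}s)")
        if elapsed > time_budget_s:
            print(f"FAIL: pipeline exceeded its {time_budget_s:.0f}s budget")
            return 1
    return 0

# Hypothetical stages, ordered from fastest to slowest feedback.
stages = [
    ("smoke", [lambda: True, lambda: True]),
    ("api", [lambda: True, lambda: False]),  # one failing API check
    ("ui", [lambda: True]),                  # never runs because "api" failed
]
exit_code = run_pipeline(stages)
print("exit code:", exit_code)  # 1
```

In a CI job, the returned code would be passed to `sys.exit` so the job is marked as failed. Headless browser execution is configured in the UI test framework itself and is not shown here.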

Automation Is Not a Replacement for Thinking

This is my core engineering belief.

Automation can only verify what it has been told to check. Software quality also depends on:

Business understanding

User behavior analysis

Risk awareness

Product strategy alignment

Automation should amplify QA intelligence, not reduce it.

My Practical Rule for Deciding What to Automate

I use this decision checklist:

✔ Is the feature critical to business value?

✔ Does it have high regression risk?

✔ Will it be tested repeatedly?

✔ Does automation improve feedback speed?

✔ Is the behavior stable enough for automation?

If the answers are mostly no, I keep the testing manual or postpone automation until the feature stabilizes.
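The checklist can be sketched as a small function. The field names, the two-signal threshold, and the three outcomes are assumptions for illustration, not a formal rule; stability is treated as a gate, so valuable but still-changing features are postponed rather than dropped.

```python
from dataclasses import dataclass

MIN_VALUE_SIGNALS = 2  # hypothetical threshold: at least two of the four value questions

@dataclass
class Candidate:
    name: str
    business_critical: bool
    high_regression_risk: bool
    tested_repeatedly: bool
    speeds_up_feedback: bool
    behavior_stable: bool

def automation_decision(c: Candidate) -> str:
    """Apply the checklist: value questions first, then stability as a gate."""
    value_signals = sum([c.business_critical, c.high_regression_risk,
                         c.tested_repeatedly, c.speeds_up_feedback])
    if value_signals < MIN_VALUE_SIGNALS:
        return "keep manual"
    if not c.behavior_stable:
        return "postpone automation"  # worth automating, but not yet
    return "automate"

checkout = Candidate("checkout flow", True, True, True, True, True)
onboarding = Candidate("redesigned onboarding", True, False, True, True, False)
visuals = Candidate("marketing page visuals", False, False, False, False, True)

for candidate in (checkout, onboarding, visuals):
    print(f"{candidate.name}: {automation_decision(candidate)}")
```

The redesigned onboarding flow scores well on value, but because its behavior is still changing it lands in "postpone automation" rather than "keep manual".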

Conclusion

I do not aim to automate everything. I automate strategically. Test automation should protect critical functionality, accelerate feedback, and increase release confidence. The best automation strategy is not the one with the most scripts. It is the one that helps teams deliver reliable software faster while keeping maintenance effort sustainable. Quality assurance is not about finding every bug. It is about ensuring the product works where it matters most. Automation is simply a powerful tool to achieve that goal.